A/B Testing with Amazon’s Manage Your Experiments in 2021

When you launch new marketing content on Amazon, such as photos, infographics, or A+ Content, how do you gauge its effectiveness?

Maybe you made changes right before a holiday, or maybe there was some major news story going on at the time.

Either way, the data you see is likely skewed and can’t tell you which changes actually made a difference.

The reality is that no matter what’s going on in the world, running one content variation at a time won’t give you reliable insight into how effective that content is.

A better approach is to test content variations against each other during the same time period. This process is known as A/B split testing, and it’s exactly what Amazon’s Manage Your Experiments tool lets you do.

In 2019, Amazon launched “Manage Your Experiments.” It lets you A/B test your A+ Content, your product title, and, since early 2021, your main product image.

A/B testing takes some of the burden off your shoulders because you don’t have to decide on one marketing piece; instead, you can run multiple experiments to find out what works.

Sometimes the creative you thought was “better” turns out to be a total dud. If you had acted on your judgment alone, you would have lost sales. A/B testing helps you avoid that mistake.

Another benefit of the Manage Your Experiments feature is that you get to see what imagery and words are most compelling to your customers.

Even if a tested version doesn’t beat your main version, you can compare it with the “B” variations from your other experiments and learn which images and words get a stronger response.

In this article, we’re going to share an overview of Amazon’s Manage Your Experiments tool and some general best practices to get the most out of it.

What is A/B Testing?

Split testing means taking one version of a product page or piece of content and testing it against another version. In some setups, you test several versions at once, showing each version to a different segment of visitors during the same period.

Some split-testing tools let you set the percentage of traffic that goes to each version, but Amazon doesn’t offer that option.

Currently, Amazon lets you test only two versions, and it assigns them to visitors at random. A logged-in visitor keeps seeing the same version on every visit, which keeps repeat page visits from skewing the results.
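Amazon doesn’t document how it splits traffic, but the general idea of an assignment that is random across visitors yet consistent for each visitor can be sketched in a few lines of Python. This is purely illustrative: `assign_variant`, the visitor IDs, and the experiment name are hypothetical, and hashing is just one common way split-testing tools keep a returning visitor on the same version.

```python
import hashlib
from collections import Counter


def assign_variant(visitor_id: str, experiment_id: str) -> str:
    """Return "A" or "B" for a visitor, the same way on every visit.

    Hypothetical illustration only: Amazon does not publish how it assigns
    visitors. Hashing the experiment and visitor IDs together gives a split
    that looks random across visitors but is repeatable for one visitor.
    """
    key = f"{experiment_id}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # The first byte of a SHA-256 hash is effectively uniform, so its
    # parity splits visitors roughly 50/50 between the two versions.
    return "A" if digest[0] % 2 == 0 else "B"


if __name__ == "__main__":
    # A repeat visit by the same (made-up) visitor lands in the same version.
    print(assign_variant("visitor-123", "title-test"))
    print(assign_variant("visitor-123", "title-test"))

    # Across many made-up visitors, the split comes out close to even.
    counts = Counter(
        assign_variant(f"visitor-{i}", "title-test") for i in range(10_000)
    )
    print(counts)
```

Because the result depends only on the two IDs, a returning visitor always gets the same answer, while different visitors scatter roughly evenly between A and B.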

When you run an A/B test, let it run for at least four weeks before you analyze the results. Then update your content with the variation that converted best.
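Why wait weeks? Each version needs enough visitors before a difference in conversion rate can be told apart from random noise. Amazon doesn’t publish its thresholds, so as a generic illustration, here is the standard sample-size formula for comparing two conversion rates. The function name and the conversion rates in the example are hypothetical, and the 5% significance level and 80% power are common textbook defaults, not Amazon settings.

```python
from math import ceil
from statistics import NormalDist


def sessions_per_version(baseline_rate: float, target_rate: float,
                         alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate sessions each version needs to detect a change from
    baseline_rate to target_rate with a two-sided two-proportion test.

    alpha: acceptable false-positive rate (0.05 means 95% confidence).
    power: chance of detecting the lift if it is real.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    # Sum of the binomial variances of the two conversion rates.
    variance = baseline_rate * (1 - baseline_rate) + target_rate * (1 - target_rate)
    lift = target_rate - baseline_rate
    return ceil((z_alpha + z_power) ** 2 * variance / lift ** 2)


if __name__ == "__main__":
    # Hypothetical listing: 10% of sessions convert today, and you hope
    # a new main image lifts that to 12%.
    print(sessions_per_version(0.10, 0.12))
```

With these made-up numbers, the answer is roughly 3,800 sessions per version, or about 7,700 in total. Because the lift is squared in the denominator, halving the lift you want to detect roughly quadruples the traffic you need.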

What Variables Should You Test with Manage Your Experiments?

When you’re setting up a split test, you want to make sure you’re testing one big change at a time. Here are just a few variables you might consider testing:

a. Product Title

- Size – Try placing your product size toward the front or the back of the title. If size isn’t a major purchase factor, you can test removing it.

- Call Out Target Audience – Consider calling out your target audience in your title, for example “{product name} for Nurses and Health Care Workers.”

- Call Out Use Cases – Consider calling out different use cases in your title, for example “{product name} for Kitchen and Living Room Decorations.”

b. Main Photos

- Angle

- Product Box vs. No Product Box

- Product Layout

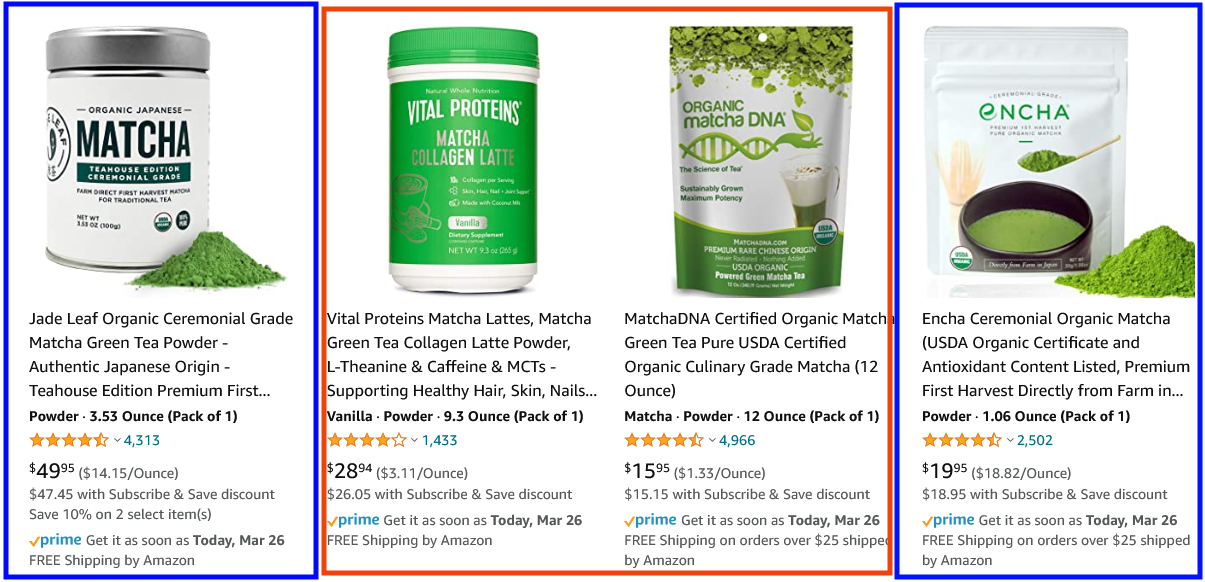

Let’s look at a supplement as an example:

If you were selling a supplement, you might test a main image that shows the powder (or capsules) alongside the package against an image of the package alone.

You might not think it would affect conversions, but showing the capsules or the powder makes the product feel more tangible.

Also, many customers judge a supplement by the color of the powder or the size of the capsules. If you make those features prominent by placing them on your main image, you might drive more page visits and conversions.

c. A+ Content

- Modules – Consider adding graphs, comparison tables, or text vs. image modules to your A+ content.

- Images – You might test using more lifestyle images and fewer product-focused images.

- Change the Emphasis – If you currently place more emphasis on your product’s features, you might add more content that emphasizes the benefits. Or if you focus on one feature of your product, you might expand and highlight some of the other features.

Here’s an example:

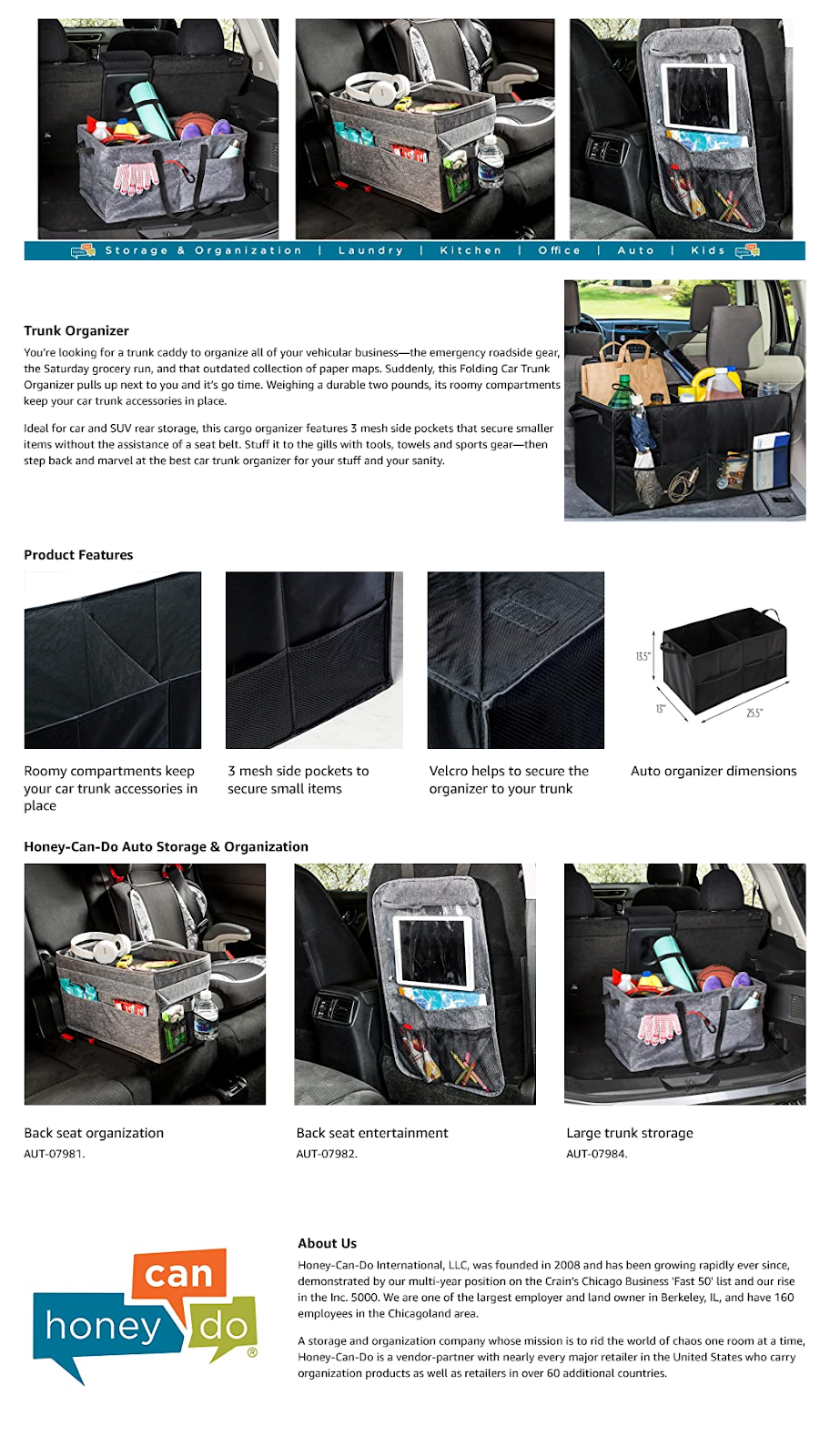

The A+ Content in the first image (Oassar) focuses heavily on features. Each image has a headline naming a feature, and the subtext explains the benefit that feature provides.

The A+ Content in the second image (Honey Can Do) focuses on lifestyle. Most of the images show the product in use. You see the car organizer on the car seat, in the trunk, and on the back of the car seat. The prospective buyer doesn’t have to imagine what using the product would look like.

Now, how might these sellers A/B test using Manage Your Experiments?

Well, the first seller might want to test using lifestyle images in their A+ content, while the second seller might want to test highlighting the features.

How Does Manage Your Experiments Work?

So, now that you have an idea of what you can test with Manage Your Experiments, how do you use the tool?

Well, the first thing you want to do is make sure you’re a brand-registered seller (enrolled in Amazon Brand Registry) in the U.S. marketplace and that you already have A+ Content running.

The reason is that Amazon only lets you run experiments on “high-traffic ASINs.”

Having A+ Content live for a while is how Amazon determines whether your ASIN gets enough traffic to run a statistically significant experiment. Amazon doesn’t publish its exact thresholds, but they appear to vary by category.

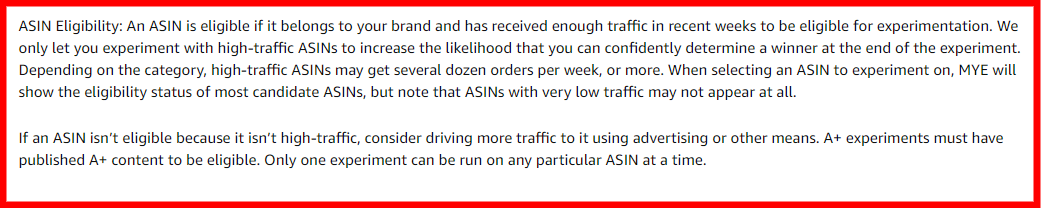

Here’s what Amazon has to say:

Since the requirements for a “high-traffic ASIN” are so vague, how do you determine which of your ASINs are eligible?

To find out, go to the Manage Your Experiments page and try to “Create A New Experiment.” Any eligible ASINs you have will be listed there.

Once you have eligible ASINs, you’ll want to determine which variable you want to test first. Create that new variation and upload it to Manage Your Experiments.

Amazon says submissions can take up to 7 business days, but you’ll usually see approval or disapproval within 48 hours.

After you’re approved, it’s time to set up and schedule your A/B test in Manage Your Experiments.

You can run experiments for 4, 6, 8, or 10 weeks. Amazon recommends 8 – 10 weeks. But don’t worry: You can adjust the schedule or turn off the test while it’s running. After about 1 – 2 weeks, you’ll start to see data.
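If you already have a rough per-version sample target and know your weekly traffic, a short helper can check which of the four available durations your traffic could fill. This is a hypothetical sketch: `shortest_duration` and the traffic figures are made up, it assumes an even 50/50 split and steady weekly sessions, and it only checks a traffic floor. Amazon’s 8 – 10 week recommendation still applies.

```python
from typing import Optional

# Experiment lengths offered by Manage Your Experiments, in weeks.
ALLOWED_WEEKS = (4, 6, 8, 10)


def shortest_duration(sessions_needed_per_version: int,
                      weekly_sessions: int) -> Optional[int]:
    """Return the shortest allowed duration (in weeks) whose expected
    traffic covers both versions, or None if even 10 weeks falls short.

    Assumes an even 50/50 split and steady weekly traffic, both
    simplifications for illustration.
    """
    for weeks in ALLOWED_WEEKS:
        sessions_per_version = weeks * weekly_sessions / 2
        if sessions_per_version >= sessions_needed_per_version:
            return weeks
    return None


if __name__ == "__main__":
    # Hypothetical listing that needs about 3,800 sessions per version.
    print(shortest_duration(3_800, 2_000))  # 4 weeks of traffic is enough
    print(shortest_duration(3_800, 1_000))  # needs 8 weeks
    print(shortest_duration(3_800, 500))    # None: 10 weeks is too short
```

A `None` result is a hint that the listing may need more traffic before a test of that size is worth running.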

When your experiment ends, Amazon gives you insights such as which variation performed best, how confident you can be in the results, and the estimated 1-year impact the winning content could have on sales.
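Amazon doesn’t document how it calculates the estimated 1-year impact. As a back-of-the-envelope sketch, and not Amazon’s formula, you can project a winning variation’s extra sales by multiplying its conversion-rate lift by expected yearly traffic and your average selling price. The function name and all inputs are hypothetical, and the projection assumes the lift, weekly traffic, and price all stay flat for a year.

```python
def projected_annual_impact(control_rate: float, variant_rate: float,
                            weekly_sessions: float,
                            average_price: float) -> float:
    """Rough extra yearly sales (in dollars) if the variant replaces the control.

    Rates are units ordered per session. This is a simple projection,
    not Amazon's formula: it assumes the measured lift, weekly traffic,
    and price all hold steady for 52 weeks.
    """
    extra_units_per_week = (variant_rate - control_rate) * weekly_sessions
    return extra_units_per_week * 52 * average_price


if __name__ == "__main__":
    # Hypothetical: conversion rises from 10% to 11% on 2,000 weekly
    # sessions at a $25 average price.
    print(round(projected_annual_impact(0.10, 0.11, 2_000, 25.0)))  # prints 26000
```

Treat a projection like this as a ceiling rather than a promise, since seasonality and competition rarely leave traffic and price flat for a whole year.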

If the new version doesn’t convert at a higher rate, keep your current content. If it does, you can decide whether to make it your primary content and test another version against it.
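Amazon reports its own confidence figure, and this sketch doesn’t reproduce its method. If you track session and conversion counts for each version and want an independent sanity check that a version really “converts at a higher rate,” a pooled two-proportion z-test is one standard (frequentist) option. It is a substitute technique, not what Amazon runs; the function names and counts below are hypothetical.

```python
from math import sqrt
from statistics import NormalDist
from typing import Tuple


def two_proportion_z_test(conversions_a: int, sessions_a: int,
                          conversions_b: int, sessions_b: int) -> Tuple[float, float]:
    """Pooled two-proportion z-test.

    Returns (z, p_value). A positive z means version B converted better.
    """
    rate_a = conversions_a / sessions_a
    rate_b = conversions_b / sessions_b
    pooled = (conversions_a + conversions_b) / (sessions_a + sessions_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / sessions_a + 1 / sessions_b))
    if std_error == 0:
        # No conversions (or all conversions) in both versions: nothing to compare.
        return 0.0, 1.0
    z = (rate_b - rate_a) / std_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


def promote_new_version(conversions_a: int, sessions_a: int,
                        conversions_b: int, sessions_b: int,
                        alpha: float = 0.05) -> bool:
    """True only if B (new content) beats A (current content) and the
    difference is unlikely to be noise at the chosen alpha."""
    z, p_value = two_proportion_z_test(conversions_a, sessions_a,
                                       conversions_b, sessions_b)
    return z > 0 and p_value < alpha


if __name__ == "__main__":
    # Hypothetical counts: current content (A) vs. new content (B).
    print(promote_new_version(500, 5_000, 600, 5_000))  # 10% vs. 12%
    print(promote_new_version(500, 5_000, 510, 5_000))  # 10% vs. 10.2%
```

In the first made-up case, the 2-point lift on 5,000 sessions per version is very unlikely to be chance, so the new version wins. In the second, a 0.2-point gap is well within normal noise, so keeping the current content is the safer call.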

Wrapping Up

Amazon tends to keep its data close to the chest, so any opportunity it gives us to learn more about our customers and optimize our marketing is welcome.

It definitely takes time and patience to run A/B split tests, but the optimization is worth it if you plan on dominating your market. Be sure to make one big change at a time so you don’t skew the data. And give each experiment enough time to run.

Happy Selling,

The Page.One Team

The Last Word:

Amazon has hinted at plans to offer A/B testing for bullet points and secondary images, so keep an eye out for if and when those features launch.

Make sure you’re running A+ Content now and driving traffic to your listings, so they have enough traffic to be eligible for those experiments when Amazon finally launches them.